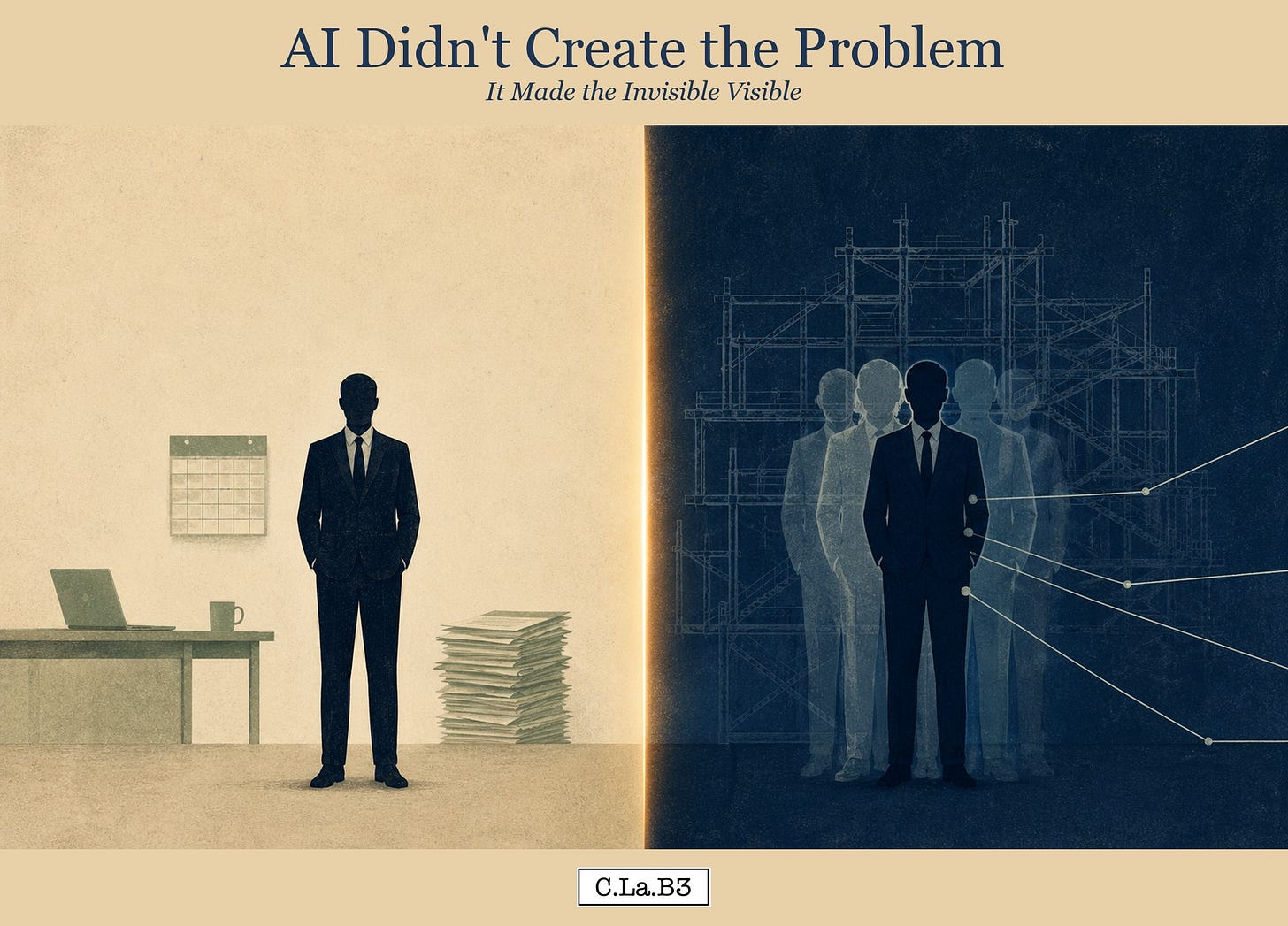

AI Didn't Create the Problem. It Made the Invisible Visible.

A reading of what AI has surfaced in your work, in your role, in the organization around you, and in yourself.

Article 1 · The Depth Layer Series

This is the first essay in The Depth Layer, a series that reads AI through the lens of systems psychodynamics. Each article moves between the personal and the organizational. What you are experiencing in your own work is examined through what the larger system is doing around you, because the two are not separate phenomena. The series gives you language and frame to read both at once.

Introduction

You have started using AI in your work. Some of it moves faster, some moves in ways you have not yet found your footing inside, and you are not always sure when to reach for it. Or you feel comfortable using it, and you wonder whether, when your colleagues see your work, they see you or the tool. Maybe you watch colleagues who are more fluent with AI produce in a day what takes you a week, and you wonder what that means about you. You wonder what is happening to your role. You wonder whether the next version of your career will still let you provide for the people who count on you. AI has surfaced something in your work, and you are not yet sure what it is.

This is a review of the self in a role when the tools and ways of working are evolving from day to day. The ground is moving under your feet. The series is a way to read that movement from the inside, not a technical walkthrough of which tool to pick or where to deploy it.

The series then extends this review from you as an individual to the organizational level, because what is happening to you is also happening at scale. Enterprises have invested between thirty and forty billion dollars in generative AI. Ninety-five percent of those organizations report zero return (Challapally et al., 2025). “The difference was never the AI model,” writes a Stanford team that studied fifty-one enterprise AI deployments. “It was always the organization” (Pereira et al., 2026). What you are noticing in your own work is a personal reading of an organizational pattern, and the pattern predates AI.

AI is a pattern-completion system. Quattrociocchi and colleagues put it formally: large language models are stochastic walks through high-dimensional graphs of linguistic transitions (Quattrociocchi, Capraro & Perc, 2025). They are not epistemic agents. They have no grounded experience, no motivation, no causal reasoning of their own.

That has a human consequence. AI completes the patterns already present in what you bring to it: your language, your framing, the documents your organization trained it on, the assumptions you carry into the prompt. The system mirrors; it does not originate. The output is a higher-definition version of what was already there. What you feel through AI is what your organization has been carrying all along, now legible to you.

AI did not create the problem. It made the invisible visible.

This essay begins that reading. You will leave with the language to name what AI has surfaced in your work, in your role, in the organization around you, and in yourself, and a frame to work with it rather than be moved by it.

Three Tasks Running at Once

You sit down to use AI for a piece of work you have done many times. You describe what you want it to produce. As you type the prompt, you realize that what you are asking for is not what you actually do. There is the normative task, the role as your job description defines it. There is the existential task, the role as you think of yourself doing it. There is the phenomenological task, the role your actual behavior has been carrying out. Until AI asked you to name one of them for a machine, the three were never distinct.

Systems psychodynamics has carried this frame for decades.

“The normative, or formal or official, primary task” is the role as it is described on paper. The existential task is “the client’s interpretation of and meaning that they give to the task of their role and activities.” The phenomenological task is “the task that can be inferred from people’s behaviour and of which they may not be consciously aware” (Lawrence, 1977; Brunning, 2006; Obholzer & Roberts, 1994).

Rice named the primary task long before this three-level distinction:

“The work that must be done if the organisation is to survive” (Rice, 1958).

Most of professional life has been organized to keep these three close enough that nobody has to distinguish them. This is a social defense. But AI cannot hold the three together. A pattern-completion system works only on what is articulated and given to it. When you ask AI to help with a piece of work, the description you give is almost always closer to the normative task than to the other two. The meaning you bring and the behavior you actually exhibit are not in the prompt, and so they are not in the output.

The data is beginning to show this. Dillon and colleagues ran a field experiment across sixty-six firms and 7,137 knowledge workers, in which access to Microsoft Copilot was randomly assigned. Workers saved two hours a week on email.

“Apart from these individual time savings, we do not detect shifts in the quantity or composition of workers’ tasks” (Dillon, Jaffe, Immorlica & Stanton, 2025).

The task was performed faster; it was not performed differently. To have been performed differently, the existential and phenomenological tasks would have had to enter the prompt. They did not, because nobody had ever needed to articulate them. Stanford’s study of fifty-one enterprise AI deployments found the same pattern at scale:

“77% of the hardest challenges practitioners faced were invisible costs: change management, data quality, and process redesign, not technical issues. Technology was consistently described as the easiest part” (Pereira, Graylin & Brynjolfsson, 2026).

What was exposed was everything that had been carrying the work without being named.

Until now, the gap between your three tasks could be lived with, or the lines separating them were blurred enough to be dismissed. AI, by requiring a single articulated task at the front end, turns the gap into an operational question.

Once the task is in the open, a second question comes with it. What structure had been keeping the divergences manageable?

The Architecture No One Knew They Were Depending On

The anxiety underneath the three tasks is older than your role. It is the dread of being seen doing something other than what you said you were doing, the recognition that the version of your work you can articulate is partial, and the worry that if the gap were named, the people around you would lose the version of you they had been working with. The parts you cannot articulate are the parts that matter most.

This anxiety is structural. Every organization carries it, and every organization develops architecture to hold it.

Isabel Menzies Lyth named the architecture in a 1960 study of nursing in a British teaching hospital. The procedures, hierarchies, rotations, and language patterns of the nursing service had a hidden function: protecting nurses from the unbearable affect of caring for dying patients. The structure metabolized anxiety the staff could not metabolize alone. Strip the structure away, and the anxiety surfaces raw.

Elliott Jaques described the same phenomenon five years earlier in Klein’s edited volume on psychoanalysis:

“Social systems as a defence against persecutory and depressive anxiety” (Jaques, 1955).

Jaques argued the same dynamic applies to all social systems. Wilfred Bion extended the frame to group dynamics. A functioning group operates as what he called a work group, organized around its task. When the group cannot tolerate its own anxiety, it slips into a basic assumption mode: dependence on a leader who will save it, fight against an enemy that explains it, or pairing toward a future event that will resolve it (Bion, 1961). The basic assumption mode is defense in the form of work.

The three tasks were held together by exactly this kind of architecture. Job descriptions, meeting cadences, reporting lines, the shared vocabulary that lets you describe your role in terms close enough to your actual behavior that nobody has to confront the divergence. The architecture was load-bearing. You did not know it was load-bearing because you never had to ask what it was holding up.

AI is dissolving the architecture in three places at once.

It is dissolving the silos that once kept expertise apart. Dell’Acqua and colleagues ran a field experiment with professionals at a global consumer goods company. Without AI, R&D professionals proposed technical solutions and commercial professionals proposed commercial ones. With AI, both groups produced balanced solutions regardless of background.

“AI breaks down functional silos” (Dell’Acqua et al., 2025).

The lived consequence is that the boundaries which defined what each professional was for are no longer doing the work they used to do.

It is rewiring the informal network that ran underneath the formal one. Buchsenschuss and colleagues studied 316 employees randomly given a grounded AI assistant in a European technology firm. Those with the assistant became more central in both collaboration and knowledge networks. AI acted as a translator that lowered the cost of collaboration and as a catalyst that made individuals more valuable as knowledge sources (Buchsenschuss et al., 2026).

Interactions reorganized around who the assistant made more useful, not around who had been carrying the relational architecture before.

It is constructing a new presence inside the organization that has its own developing identity. Mohanty and Grundstrom followed a machine learning system in a wind energy company for twenty-eight months. The system’s identity was constructed in motion, negotiated through interactions with domain experts who had to decide what the system was for, what it could be trusted with, what it counted as.

They named the dynamic the “performativity of AI identity as a longitudinal, dynamic, and socially constructed process” (Mohanty & Grundstrom, 2025).

A new actor entered the organization, and the architecture had to accommodate it.

The architecture here is being adapted to a new purpose. The anxiety it had been containing is now reaching you.

Once it reaches you, the organization does something with it.

What the Organization Needs AI to Be

When the anxiety the architecture had been containing reaches the organization, the organization does not sit with it. It does something simpler: it projects.

Projection is the technical name for the act of attributing what is happening inside to a cause outside. Melanie Klein traced the mechanism back to infancy. To manage early anxiety, the infant divides experience into a good part, which it tries to own, and a bad part, which it pushes outside itself into an object.

“The processes of splitting off parts of the self and projecting them into objects are thus of vital importance in normal development as well as for abnormal object relations” (Klein, 1946).

Organizations do the same thing. The dysfunction that was always there, now made legible by AI, gets attributed to AI itself.

The projections take three recognizable shapes, and AI provokes all three at once, across different parts of the same organization.

The first is dependence. AI becomes the savior that will solve the problems leadership has not solved. The procurement decision becomes a strategy. A roadmap to deployment substitutes for a theory of what the organization is for. Engagement with AI becomes a way to avoid engagement with everything else.

The second is fight-flight. AI becomes the threat to be attacked or fled from. The attack takes the form of compliance memos, governance frameworks built before there is anything to govern, and selective citation of the case for refusal. The flight takes the form of a policy that forbids in private what executives endorse in public, or of an outright refusal to use AI tools at work.

The third is pairing. The organization pins its hope on a future event that will resolve the present. The next model release. The next vendor. The next reorganization that will arrange the right people around the right tools. The pairing keeps the present in suspension. Nothing has to be confronted now, because the resolution is coming.

All three projections share a quality. Each makes AI into something that lets the existing architecture stay standing. The savior does the work the architecture cannot do. The threat justifies the architecture’s defensive consolidation. The future event lets the architecture postpone confrontation. What the organization needs AI to be, in every case, is something it can metabolize without changing.

The most common projection is a fourth, smaller one: AI as a neutral instrument, a productivity layer that does not change how the work is done. Workers use AI to do their existing work faster. The same pattern shows up at population scale. Humlum and Vestergaard studied 25,000 workers across 7,000 workplaces in Denmark and found “precise zeros” on earnings and recorded hours despite widespread adoption (Humlum & Vestergaard, 2025). The technology arrived. The work did not change. This defensive adoption is the projection of AI as a tool, held just firmly enough that the existing arrangements can stay in place.

The projection eventually meets its contradiction. Mohanty and Grundstrom’s ethnography is the case in point. Organizations need AI to be a thing, predictable and controllable, the noun a procurement contract names. What domain experts ended up doing, day by day, was negotiating what the system was for, what it could be trusted with, what it counted as. The projection of AI as a thing collapsed against the relationally constituted AI that practice produced. The need was for an instrument. What arrived was an actor. An actor stays in the system between uses. It develops patterns nobody assigned to it. The instrument projection was the architecture’s way of refusing relationship in advance, but relationship is the form an actor takes regardless.

The organization can hold the projection for a while. It cannot hold it forever. The gap between what the organization needs AI to be and what AI keeps showing itself to be widens with use. At some point, the projection breaks open, and what was being projected outward gets thrown back inward. The internal map each leader had been carrying, the picture each part of the organization held of the whole, becomes visible for the first time.

The Map That Became Public

When the projection breaks, the first thing exposed is a map. Every leader in the organization has been carrying one. None of them have ever been compared.

David Armstrong called this map the “organisation in the mind.” It is the picture each leader holds, half-conscious, of what the organization is, what it does, and how its parts connect. The picture is built from years of role experience, conversation, observation, and the sediment of past decisions. It is real to the leader who carries it, and it operates in every interaction they have.

“Organisation in the mind is the picture which all organisational members and others will have in mind when interacting across boundaries” (Armstrong, 2005).

The maps are never identical between leaders, and they are rarely compared. The architecture that held the three tasks together also kept the maps separate, because as long as nobody compared the maps, the divergences could stay invisible.

AI implementation forces the comparison. To deploy a model, the organization has to agree on what counts as a customer, what counts as the process, what counts as the data, what counts as a decision. The maps become visible to each other for the first time. Each leader is shocked to discover that the picture in their head is not the picture in their colleagues’ heads, because they had assumed the others were carrying roughly the same thing.

The empirical signature of map alignment is strategic clarity, and it predicts adoption with unusual force. Christos Makridis studied a panel of 30,000 employees across organizations and found that perceived strategic clarity is the dominant correlate of frequent AI use. Employees in clear-strategy organizations are roughly 26 percentage points more likely to report frequent use (Makridis, 2026). Only 15 percent of employees say their employer has communicated a clear AI strategy. When such a strategy is in place, employees are nearly five times more likely to feel comfortable using AI.

Strategic clarity is the visible evidence that the organisation-in-the-mind work has been done. When leaders have aligned their internal maps enough to articulate a shared strategy, employees inherit a coherent picture of where AI fits and what it is for. Where the alignment has not been done, the strategy reads as a slogan, employees default to the small projection of AI as a productivity layer, and adoption stalls in the gap between the published roadmap and the leaders’ actual maps.

The blindness operates upstream of strategy. Aakash Sapru identified sociotechnical blindness as one of four dimensions of resistance to AI: the inability to see the system the technology is embedded in (Sapru, 2026). The blindness is a feature of how organizations train people to perceive their work. Each leader sees their own function clearly and sees the other functions through the lens of their own. The map of the whole is something the architecture never required anyone to construct.

When the projection breaks and the maps become public, the organization is faced with a different problem than it thought it had. The organization thought the problem was AI adoption. The work in front of it is map construction. The leaders who can lead AI adoption are the leaders who can hold the work of comparing maps, naming the divergences, and constructing a picture of the whole that the organization can act on together.

The data suggests that most cannot do this. The capacity has not been developed because it has never been required. Those who can are visible in the data.

The Five Percent

The data is asymmetric. Five percent of organizations capture the value AI is supposed to create. Ninety-five percent do not. The Stanford team studied the five percent in detail.

Pereira and colleagues followed fifty-one successful enterprise AI deployments over five months. The deployments varied widely in technology, vendor, and use case. What predicted success was constant across the variation. “The difference was never the AI model,” they wrote. “It was always the organization. Its readiness, its processes, its leadership, its willingness to change and fail” (Pereira et al., 2026). What was treated as a slogan in the opening is now a finding.

What distinguished the successful organizations was the work they had done before the technology arrived. Seventy-seven percent of the hardest challenges the practitioners reported were invisible costs: change management, data quality, process redesign. Technology was consistently described as the easiest part. The successful organizations had already done the change management. They had already cleaned the data. They had already redesigned the processes. The technology landed on infrastructure that could hold it.

The unsuccessful organizations had not. The same study found that resistance was structurally embedded. Staff functions (Legal, HR, Risk, Compliance) were the most frequent source of resistance, accounting for thirty-five percent of all resistance encountered. Resistance came from the parts of the organization whose primary task is to manage organizational risk and who see clearly that the maps are misaligned, that the projections are operating, and that the architecture is not ready for what AI requires of it. The resistance is the organization protecting itself from a deployment it knows it cannot absorb.

The Atlanta Fed surveyed seven hundred and fifty corporate executives across the United States economy. They found a productivity paradox: perceived productivity gains exceeded measured productivity gains. Executives are reporting more value from AI than the data shows AI is producing. The gap is the projection meeting the measurement. Larger companies anticipate AI-driven workforce reductions. Smaller companies expect modest gains. Both projections precede the data that would justify either of them (Baslandze et al., 2026).

The Denmark study reaches the deepest. Humlum and Vestergaard found modest productivity gains of 2.8 percent on average, combined with weak wage pass-through. Even when AI delivered productivity, the productivity did not flow into earnings or hours. The organization absorbed the gain back into existing arrangements. The architecture held.

The five percent are the organizations that did the depth work before AI arrived. They had articulated their three tasks. They had named the architecture they were depending on. They had withdrawn the projections. They had compared the maps. AI arrived and had something to map onto, because the organization had already mapped itself.

The ninety-five percent are organizations that hoped AI would do the depth work for them. They needed AI to be the savior, the threat, the future event, the neutral instrument. The work is still in front of them.

It is also in front of you.

Closing

The work in front of you is the same work in front of the organization, beginning at a smaller scale.

You can articulate your three tasks. You can name the normative description of your role, the meaning you carry into it, and the behavior you actually exhibit, and you can let the divergences become real to you. You can name the architecture you have been depending on, the routines and language patterns and meeting cadences and shared vocabulary that held the divergences manageable. You can notice your own projections onto AI, the savior, the threat, the future event, the neutral instrument, and you can let the projections soften enough to see what is underneath. You can compare your map to the maps the people around you carry.

AI cannot do this work for you. It completes patterns. The patterns you bring to it are yours to construct.

What you are doing when you take up the work is the work the architecture had been doing for you. You are taking it back. You are placing the load-bearing function inside yourself rather than inside the structure around you. The architecture may continue to fail. Your capacity to hold the work it had been holding is what you can build.

This is the recognition step. The next articles in this series go deeper. The threat AI poses to your identity. The liminal space you are crossing through. The shadow organization that no implementation plan accounts for. The containment work you will be asked to do for others. The horizon you cannot see from where you are now.

Each of those depends on the work of recognition. You cannot work with what you cannot see.

AI did not create the problem. It made the invisible visible. The invisible is now yours to read.

For Reflection

These questions are an invitation, not a checklist. Sit with the ones that catch.

Where in your current role can you name the divergence between what your job description says, what you think you do, and what your behavior actually carries out?

What routines, language patterns, or shared vocabularies in your work have you come to rely on without ever asking what they hold together?

When you encounter AI in your work or your organization, which of the four projections do you reach for first: the savior, the threat, the future event, or the neutral instrument? What is that projection protecting you from?

When was the last time you compared your internal picture of the organization to the picture a colleague carries? What surfaced in the comparison?

Where in your own AI use are you doing the same work faster rather than doing different work?

What single piece of articulation, alignment, or projection-withdrawal could you do this week that would change how AI lands in your work?

If the architecture you have been depending on continues to fail, what part of its load are you ready to carry yourself?

References

Armstrong, D. (2005). Organization in the mind: Psychoanalysis, group relations and organizational consultancy. Karnac.

Baslandze, S., Edwards, Z., Graham, J. R., McClure, T., Sparks, M., Meyer, B., Waddell, S. R., & Weitz, D. (2026). Artificial intelligence, productivity, and the workforce: Evidence from corporate executives (Federal Reserve Bank of Atlanta Working Paper No. 2026-4). Federal Reserve Bank of Atlanta. https://doi.org/10.29338/wp2026-04

Bion, W. R. (1961). Experiences in groups and other papers. Tavistock Publications.

Brunning, H. (Ed.). (2006). Executive coaching: Systems-psychodynamic perspective. Karnac.

Buchsenschuss, R., Koch-Bayram, I., Biemann, T., & Puranam, P. (2026). The impact of generative AI adoption on organizational networks: Evidence from a field experiment (INSEAD Working Paper No. 2026/01/STR). INSEAD. https://ssrn.com/abstract=6028034

Challapally, A., Pease, C., Raskar, R., & Chari, P. (2025). The GenAI divide: State of AI in business 2025. MIT NANDA. https://www.media.mit.edu/groups/nanda/overview/

Dell’Acqua, F., Ayoubi, C., Lifshitz, H., Sadun, R., Mollick, E., Mollick, L., Han, Y., Goldman, J., Nair, H., Taub, S., & Lakhani, K. R. (2025). The cybernetic teammate: A field experiment on generative AI reshaping teamwork and expertise (NBER Working Paper No. 33641). National Bureau of Economic Research. https://www.nber.org/papers/w33641

Dillon, E. W., Jaffe, S., Immorlica, N., & Stanton, C. T. (2025). Shifting work patterns with generative AI (NBER Working Paper No. 33795). National Bureau of Economic Research. https://www.nber.org/papers/w33795

Humlum, A., & Vestergaard, E. (2025). Large language models, small labor market effects (University of Chicago Booth School of Business Working Paper No. 2025-56). University of Chicago Booth School of Business.

Jaques, E. (1955). Social systems as a defence against persecutory and depressive anxiety. In M. Klein, P. Heimann, & R. E. Money-Kyrle (Eds.), New directions in psycho-analysis (pp. 478-498). Tavistock Publications.

Klein, M. (1946). Notes on some schizoid mechanisms. International Journal of Psycho-Analysis, 27, 99-110.

Lawrence, W. G. (1977). Management development: Some ideals, images and realities. Journal of European Industrial Training, 1(2), 21-25. https://doi.org/10.1108/eb002269

Makridis, C. A. (2026). Organizational transmission of AI: Managerial influence on generative AI adoption (CESifo Working Paper No. 12373). CESifo. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6053356

Mohanty, P., & Grundstrom, C. (2025). The making of AI’s identity for organizational transformation: An ethnographic study. ICIS 2025 Proceedings. Nashville, Tennessee, USA.

Obholzer, A., & Roberts, V. Z. (Eds.). (1994). The unconscious at work: Individual and organizational stress in the human services. Routledge.

Pereira, E., Graylin, A. W., & Brynjolfsson, E. (2026). The enterprise AI playbook: Lessons from 51 successful deployments. Stanford Digital Economy Lab. https://digitaleconomy.stanford.edu/publication/enterprise-ai-playbook/

Quattrociocchi, W., Capraro, V., & Perc, M. (2025). Epistemological fault lines between human and artificial intelligence [Preprint].

Rice, A. K. (1958). Productivity and social organisation: The Ahmedabad experiment. Tavistock Publications.

Sapru, A. (2026). Psychological resistance to AI: How regulatory focus fuels AI anxiety and negative attitudes toward AI. Technology in Society, 86, 103251. https://doi.org/10.1016/j.techsoc.2026.103251